A student of mine went to a conference, then got an email from an unknown journal. The student asked me if this was normal and whether the journal was legit. Here’s the process I went through to evaluate the journal and try to help the student.

I googled the journal title. The first thing I noticed was the domain name. The publisher's name is not a correctly spelled English word, which means either that the publisher is trying to be gimmicky or that it is using a non-English spelling. Neither makes a good first impression.

The sidebar lists journal information, and I see “Year first Published: 2019”. So even if this is a legitimate journal, it has no track record and probably no reputation. And journals are all about reputation.

Nor does the journal info sidebar say anything about the journal being indexed anywhere, like Web of Knowledge or Scopus. Most aspiring legitimate journals at least mention indexing, whether or not they currently have it, because most authors want their work to be findable in academic searches.

The second paragraph of the journal description has a glaringly obvious typo about the type of research the journal publishes (“-olog” instead of “-ology”). This suggests that nobody is paying attention to the home page. This could be because they are a fly-by-night operation that is only interested in charging authors, or because they’re new or inexperienced and can’t be bothered to proofread.

So this looks like either a scam (likely) or something made by careless amateurs. Neither’s good.

27 November 2019

Accreditation agency lies to support ICE sting operation on foreign students

Accreditation of universities means that they self-police and peer review each other to ensure a certain level of quality: that they are real educational institutions that are not going to vanish.

I am in shock to learn that one accreditation agency was complicit in a terrible hoax.

The Detroit Free Press is reporting that the US government, via Immigration and Customs Enforcement (ICE), created a fake university, the University of Farmington.

Attorneys for the students arrested said they were unfairly trapped by the U.S. government since the Department of Homeland Security had said on its website that the university was legitimate. An accreditation agency that was working with the U.S. on its sting operation also listed the university as legitimate.

There is a lot going on in this story, and it’s not clear to me who this “sting” was intended to target. The story mentions that “recruiters” have been charged, but their role is not clear.

But I am sort of stunned by the arguments the officials running it are making:

Attorneys for ICE and the Department of Justice maintain that the students should have known it was not a legitimate university because it did not have classes in a physical location. ...

“Their true intent could not be clearer,” Assistant U.S. Attorney Brandon Helms wrote in a sentencing memo this month for Rampeesa, one of the eight recruiters, of the hundreds of students enrolled. “While ‘enrolled’ at the University, one hundred percent of the foreign citizen students never spent a single second in a classroom. If it were truly about obtaining an education, the University would not have been able to attract anyone, because it had no teachers, classes, or educational services.”

But another part of the story says:

The school was located on Northwestern Highway near 13 Mile Road in Farmington Hills and staffed with undercover agents posing as university officials.

So it’s not as though this fake “university” was just a website.

In any case, I am kind of against the whole “They should have known” argument when this fake university was listed as accredited. This is supposed to be the whole point of accreditation: to protect people from scams. Accreditation should protect people from profiteering scams and government entrapment scams.

The accreditation agency that participated in this should be ready to answer a lot of questions. I think this was extremely problematic behaviour on the part of the accrediting agency. It calls into question every other accreditation decision. If a government can warp the accreditation process for a sting, what other ways can “accreditation” be had?

External links

ICE arrests 90 more students at fake university in Michigan

16 November 2019

The crackpot index, biology edition

Amanda Glaze wrote:

Can someone with some free time create a crackpot index for biology like the one that exists in physics?

At the very top of that index there needs to be a section for making arguments that foundational research in a field is completely wrong and using a clip art PowerPoint displaying your own theory based on no research whatsoever as a viable alternative.

Challenge accepted!

The likelihood of someone making revolutionary changes in biology:

- A -5 point starting credit.

- One point for every statement that is already addressed in TalkOrigins.

- Two points for every exclamation point!

- Three points for each word in ALL CAPS.

- Five points for saying that “theories” are less likely to be true than “laws” or “facts.”

- Five points for every mention of “entropy” or “Second law of thermodynamics.”

- Ten points for each use of the words “Darwinism” or “Darwinist.”

- Ten points for arguing a discredited individual should be taken seriously because they were “nominated for a Nobel prize.”

- Ten points for saying that “Scientists are the ones who aren’t following the evidence.”

- Ten points for arguing that historically documented events are “statistically impossible.”

- Ten points for saying a current well-established theory is “only a theory.”

- Ten points for calling the current theory “a theory in crisis.”

- Ten points for asserting that evidence only counts if personally witnessed, in real time, by a human being.

- Twenty points for claiming that a theory has a huge problem because nobody has seen things happen that the theory predicts should not happen.

- Twenty points for listing people who “dissent” from current ideas, whether or not they have any training or experience in the field in question.

- Twenty points for finishing any claim or argument with the word, “Checkmate!”

- Twenty points for saying, “Darwin was wrong.”

- Twenty points for every other scientific discipline that must be wrong in order for your claims to be correct.

- Twenty points for asking, “Then why are there still monkeys?”

- Thirty points for asking, “Where are the transitional fossils?”

- Thirty points for suggesting that scientists on the brink of death recanted their ideas.

- Thirty points for calling any scientist an “industry shill.”

- Thirty points for claiming any scientist holds a view “just to keep the grant money coming.”

- Forty points for taking quotes from a famous scientist out of context so that they appear to support your position (“quote mining”).

- Fifty points for claiming that your views are being suppressed while writing on a social media platform, blog, or website that is not only discoverable, but lands on the first page of search engine results.

The Crackpot Index

11 November 2019

29 October 2019

Journal reviewing celebration

Recently, I completed a review for journal number fifty. Not fifty articles – fifty different journals I have reviewed for. Some only once and some multiple times.

Since you’re invited to review papers, and I usually say yes whenever possible, the list is kind of an interesting way to see what other people think I know. Mostly crustacean stuff, but I’m pleased that behaviour, evolution, nervous systems, and even internet stuff have worked their way into the list of things I’ve reviewed.

Acta Ethologica

American Midland Naturalist

Animals

Aquaculture Research

Aquatic Invasions

Behaviour

Behavioural Processes

BioInvasions Records

Biologia

Biological Invasions

Biological Journal of the Linnean Society

BMC Evolutionary Biology

Brain, Behavior and Evolution

Bulletin of Marine Science

Diversity

Drug Discovery Today

Environmental Management

Facets

Fisheries Research

Freshwater Crayfish

Herpetological Natural History

ICES Journal of Marine Science

Invertebrate Reproduction and Development

Journal of Coastal Research

Journal of Crustacean Biology

Journal of Ethology

Journal of Experimental Biology

Journal of Experimental Zoology, Part A: Ecological Genetics and Physiology

Journal of Medical Internet Research

Journal of Natural History

Journal of Neurophysiology

Journal of Visualized Experiments (JoVE)

Knowledge and Management of Aquatic Ecosystems

Management of Biological Invasions

Marine and Freshwater Behaviour and Physiology

Marine Biology Research

Nature Ecology & Evolution

Neuroscience Letters

North-Western Journal of Zoology

Open Journal of Molecular and Integrative Physiology

PeerJ

Physiology and Behavior

PLOS ONE

Proceedings of the Royal Society B: Biological Sciences

Royal Society Open Science

Science Advances

The Biological Bulletin

Zoologischer Anzeiger

Zoology

Zootaxa

24 October 2019

How academic publishing is like a really nice bra

In my jackdaw meanderings around the internet, I stumbled on this thread from Cora Harrington.

Sometimes I like to look at lace prices on sites like Sophie Hallette. It’s good for giving perspective on how, even if the cost of lingerie was just fabrics (and it’s not because people should be paid for their labor), many items would still be expensive.

She gives many examples, of which I will show just one (emphasis added):

The Chloris reembroidered lace is around $1600/meter.

And that isn’t the most expensive one. Cora concludes:

When someone says “There’s no way x could cost that much,” keep in mind that there are fabrics - literally just the fabrics - that can cost 4 figures per meter.

And the labor - the expertise - involved in knowing how to handle these fabrics is worth many, many times more.

This made me think a lot about academic publishing. Because I am always fascinated by people who say that something like undergraduate textbooks, journal subscriptions, or article processing fees for open access publishing costs “too much.” When someone says something costs “too much,” that means they have some notion in their head of what the “right” price is.

But as this example shows, people don’t always have a clear conception of the costs involved. And people complaining about costs sometimes assume that the labour involved is simple, quick, and not worth paying a decent wage for.

This is not to say prices can’t be too high. But at least as far as academic publishing goes, I’ve only seen one attempt to work out what the costs are, apart from publishers themselves, who have conflicts of interest in calculating and disclosing costs.

04 October 2019

Who co-authored the most read paper in JCB? Me.

Yes, I know there are all kinds of problems with mystery metrics. Yes, I know this reflects the new paper I co-authored being, well, a new paper with no paywall. Yes, I know that this won’t necessarily reflect the long-term impact of the paper.

Still. It feels nice.

Far too often, publishing academic papers feels like shouting into a vacuum. Or like the most agonizing of slow burns, where it takes years to know if other people will pick up on what you’ve done. So a little short-term feedback like this is pleasant.

01 October 2019

Victoria Braithwaite dies

I was saddened to learn about the untimely death of Victoria Braithwaite. Victoria was a pioneer in research on nociception in non-mammals (fish, specifically), culminating in her book Do Fish Feel Pain? (reviewed here).

I was fortunate to have her as one of the speakers for a symposium I co-organized for Neuroethology in 2012. She was a fine speaker, and I’m sorry I won’t get more chances to interact with or learn from her.

External links

Penn State community grieves loss of biologist Victoria Braithwaite

30 September 2019

Climbing the charts

A new preprint of a forthcoming paper I collaborated on dropped in Journal of Crustacean Biology last week.

Today, it’s in the journal’s “most read” list.

I have no idea how the journal calculates this list or how often it updates it. But this makes me happy. Not bad, eh?

The paper is open access, so anyone can read it. So please, help us bump that Artemia eggs paper off the top position!

Reference

DeLeon H III, Garcia J Jr., Silva DC, Quintanilla O, Faulkes Z, Thomas JM III. Culturing embryonic cells from the parthenogenetic clonal marble crayfish Marmorkrebs Procambarus virginalis Lyko, 2017 (Decapoda: Astacidea: Cambaridae). Journal of Crustacean Biology: in press. https://doi.org/10.1093/jcbiol/ruz063

23 September 2019

Science as a process and an institution

In response to a poll that showed Canadians’ trust in science might be weakening, Timothy Caulfield tweeted:

Trust in science falling. People seem angry at institutions. But science isn’t a person, a place, an industry, or an institution. Science is a process. Science is a way of understanding the world. If not science, what?

Caulfield is technically correct (which, as the saying goes, is the best kind of correct). Science is a process. But this is an overly abstracted view of science – a view taken from 30,000 feet, as it were.

Science, as currently practiced, is done by people, in places, as an industry, by institutions.

Science is a profession (although not one that many people practice as their job). Most people don’t get to publish scientific papers or make new discoveries.

Science is predominantly carried out in cities in some way.

Science has its own infrastructures of technical supplies and publishing, and it creates a product (knowledge distributed in technical papers).

Science is associated with universities and a few businesses.

Saying, “Science is a process” ignores how concentrated the community is and how the practitioners are invisible to a very large section of society. Saying, “Science is a process” ignores that, as currently practiced, science has many characteristics of an industry or institution.

Calling science a process is like calling politics “a process.” Sure, in theory anyone can and does participate in politics, but in practice, most politicking is done by professional politicians and civil servants in capital cities, participating in government and a few other organizations.

“Politics” as practiced can be seen as isolated and corrupt and untrustworthy because of how it is organized. Same with science.

If we want trust in science, we can’t fall back on these sorts of idealized dictionary definitions of science. We have to embrace how science is actually practiced. And the reality is that science can feel closed and confusing and haughty for many who have minimal connections with that community.

03 September 2019

Tuesday Crustie: Philately

Australia has issued a set of crayfish stamps!

Hat tip to Dr. Crayfish.

External links

Set of Freshwater Crayfish stamps

20 August 2019

Lessons from sport for science

Two of my interests recently intersected on ABC’s The Science Show.

As regular readers might know, I have been fascinated with the creation and ongoing development of the women’s competition of Australian Rules Football (AFL). (I am a card-carrying supporter of the Melbourne Football Club’s women’s team!) When I lived in Australia, it was clear that there were women who loved the game, but as spectators. The game was very much seen as being for blokes. I don’t think I ever heard about women playing in the time I was there.

Fast forward to a women’s league that is growing, making international waves, and expanding the audience for this sport significantly. The brilliant picture of the athleticism of Tayla Harris (shown) and her subsequent poor treatment over it made news in the US. I think that was the first time I had heard AFL on the news since moving here.

Australia’s chief scientist Alan Finkel talks about how this new league teaches us a lot about how creating opportunities makes a difference for people. And that science could learn from this (my emphasis).

So let’s go back and think about women’s AFL in the year 2000. (A year I lived in Australia! - ZF) If you were a schoolgirl in Victoria, you couldn’t play in an AFL competition once you hit the age of 14. Why not? Because there was no competition open to teenage girls. You had to wait until you were 18 to join the senior women’s league, and that league was a community competition, without sponsors, played on the worst sports grounds, in your spare time, at your own expense.

On the other hand, your twin brother with the same innate ability would be nurtured every step of the way. And by the time he turned 18, he could easily be on a cereal box and pulling a six-figure salary. Very few people in the AFL hierarchy seem to regard this as a problem. ...

(N)ow when a teenage girl has a talent for football in 2019, she has got role models on TV, she’s got mentors in her local clubs, she’s got teachers and friends who say it’s okay for a girl to like football. In fact it’s great for a girl to like football. She’s not weird, she's not an alien, she is a star. You can see that virtuous cycle starting to form: the standard of the competition rises, it attracts more women and girls, the standard of the competition rises. And we wonder why it took us so long to see what now seems so obvious: second class status for women in sport is not acceptable.

Second class status for women in science isn’t acceptable, either.

External links

Science should emulate sport in supporting women

This week on The TapRoot podcast...

I had great fun talking to Ivan Baxter and Liz Haswell for The TapRoot podcast!

We chatted about my two most recent contributions: a paper on authorship disputes, and my letter to Science about grad programs dropping the Graduate Record Exam (GRE). When I wrote those two articles, I didn’t see any connecting thread between them, but I found one for this roundtable:

(N)othing in academia makes sense except in light of assessment and how awful it is.

(And yes, I’m channeling Theodosius Dobzhansky via Randy Olson.)

Confession time: I had never listened to The TapRoot until Ivan contacted me about being on the show. To prepare, I listened to a bunch of episodes. I became increasingly excited about the prospect of being one of the guests, because The TapRoot is a damn good podcast. The discussion is great and the production values are excellent.

If you are a scientist, I recommend subscribing to The TapRoot – and not just because I’m on it! It’s on all the usual subscription services.

The recording process was not easy, though. Because I was mostly working at home at the time, we tried a test recording over my home wifi. Horrible. Awful delays, choppy audio, and generally unusable results.

Then I went to my university and used that wifi. You would think an institutional signal in the middle of summer with low use would be better, but nope. It seemed to be an issue with my particular laptop.

We finally solved the problem by using a LAN cable. I can’t remember the last time I had to use a physical cable to connect to the internet, but the old tech still works!

The screenshot is from audio editor JuniperKiss, who did a great job of making me sound more articulate than I am.

Please give the pod a listen or a read, since there’s a full transcript available!

P.S. I mentioned in this interview that my department wanted to move away from using the GRE. That was not initiated by me, since I stepped down as our graduate program coordinator a while ago.

Dropping the GRE was the plan. I learned after this episode was recorded that our department’s attempt to drop the GRE as an admissions requirement was blocked by administrators up the chain. I think, but am not sure, that it was the Texas Higher Education Coordinating Board. As I understood it, they wanted data to show that the GRE was not predictive of success in our program.

I was surprised, because there is no shortage of peer-reviewed papers on this, some of which I cited in my #GRExit letter in Science. But maybe I should not have been surprised, since the Coordinating Board had required some master’s programs at my university to add the GRE a few years ago.

I wonder why there is this desire to keep the GRE at the state level.

P.P.S. I’m sorry I said “guys” as a generic for people.

External links

Taproot S4E2: The GRExit and how we choose who goes to grad school

Taproot Season 4, Episode 2 transcript

The TapRoot on Stitcher

The TapRoot on iTunes

09 August 2019

05 August 2019

Interstellate goes international

Caitlyn Vander Weele showcased the latest iteration of her Interstellate magazine project today! It is featured in the French magazine L’ADN, in their theme issue, “Game of Neurones.”

(Americans will not fully appreciate this pun, because Americans pronounce the name of brain cells as “neuron,” with a short “o” that rhymes with “brawn.” Europeans have tended to favour pronouncing the name of brain cells as “neurone,” with a long “o” that rhymes with, yes, “throne.”)

I couldn’t be more pleased that somehow, my contribution from Volume 1 snuck into the issue! You can see the abdominal fast flexor motor neurons of Louisiana red swamp crayfish in the upper left.

Merci, L’ADN! Je suis très heureux d’être dans votre magazine! (Thank you, L’ADN! I am very happy to be in your magazine!)

External links

Interstellate, Volume 1

Interstellate, Volume 2

28 July 2019

26 July 2019

“Follow the rules like everyone else” is not punishment

Because I curate a collection of stings and hoaxes, I have been following the so-called “grievance studies” affair by Helen Pluckrose, James Lindsay, and assistant professor Peter Boghossian (the only academic of the trio). They sent hoax papers to journals. Many people have sent hoax papers to journals (hence my anthology), but Pluckrose and colleagues described it as an experiment and published it.

Inside Higher Ed reports:

Boghossian was ordered last year to take research compliance training; he has not yet done so, the letter states. Because Boghossian has not completed Protection of Human Subjects training, he is forbidden from engaging in research involving human subjects or any other sponsored research.

In other words, “Follow the same rules as everyone else.”

Just by way of comparison, and to give you an idea of what research with humans normally entails, I did an online survey for a couple of research papers (here’s one). That’s less intrusive than what Boghossian and colleagues did. I had to:

- Go through “research with human subjects” training.

- Submit a proposal to an institutional review board and have it approved.

- Include detailed descriptions of the potential benefits and risks to anyone viewing the survey.

So “Take training before you do more research” is what anyone should do.

But some reporting makes it sound like Boghossian is being treated arbitrarily (emphasis added).

- PSU punishes prof who duped academic journal with hoax ‘dog rape’ article

- Portland State bans professor from research for getting ‘grievance studies’ hoaxes published

- Portland State bans ‘grievance studies’ prof from doing research “banned from both human-subjects and sponsored research by the public university”

My prediction is that this is going to become a talking point in the American culture wars, with some trying to paint Boghossian’s letter as a dire consequence that has a chilling effect on academic freedom, is political correctness gone mad, continue buzzwords until exhausted.

Unfortunately, the language of the letter Boghossian got was pretty severe, which will contribute to the impression that the consequences for Boghossian are bad.

And it is bad, of course. It’s embarrassing to get called out for your actions and told you didn’t do the right thing by your institution and your profession.

But I bet a lot of people wish their punishment for something was a letter saying, “Follow the rules.” I’m sure some teenagers would like that more than being grounded.

23 July 2019

The failure of neuroscience education

In every field of science, there are certain basic facts. These are the facts that, if you get them wrong, mark you as naïve at best and foolish at worst.

In chemistry, one of those facts might be that everything is made of atoms.

In astronomy, one of these facts might be that the earth goes around the sun and not the other way round.

In geography, geology, and astronomy, one of those facts might be that the earth is round and not flat.

These basic sorts of facts are often used to assess people’s scientific literacy. We consider it important that people be educated in these.

But neuroscience has failed in conveying its most basic facts. Case in point:

The myth that “We only use ten percent of our brain.”

People believe this. I mean, they really believe it.

I’ve heard multiple people mention it at public scientific lectures. I’ve answered dozens of questions about this on Quora, where some version of it crops up every few days.

And that damn Luc Besson movie didn’t help.

From a neuroscientist’s point of view, saying “We only use ten percent of our brain” is as big an error as saying, “The earth is flat.” A few moments of thought should show why it can’t be true. We never hear a physician say things like, “Well, the bullet went through your skull, but luckily, it went through the 90% of your brain you never use.” It has no basis in reality.

If you go to the Society for Neuroscience to see what scientists say about this, you might find their outreach page. There, you have to navigate to their “Brain Facts” page (which should be “About Brain Facts”, not the actual landing page for “Brain Facts”), dig down to their “Core concepts” and under “Your complex brain” you can read:

There are around 86 billion neurons in the human brain, all of which are in use.

So the leading professional society for neuroscience counters the 10% brain myth with a sentence fragment that is hard to find and weakly worded.

If I were leading neuroscience education, my goal would be to make “We use 100% of our brain” the sort of bedrock scientific fact that we expect people to know.

Postscript: The 10% myth probably dates back to 1936, when American writer Lowell Thomas wrote the foreword to one of the best-selling books of all time, Dale Carnegie’s How to Win Friends and Influence People.

Thomas was summarizing an idea of psychologist William James: that people have unmet potential. Most of us could learn Russian, but don’t. We could learn to play a musical instrument, but don’t. We could learn how to repair a 1963 MGB sports car, but don’t.

Thomas added a falsely precise percentage: “Professor William James of Harvard used to say that the average man develops only ten per cent of his latent mental ability.” Somewhere along the line, “mental ability” became “brain.” This isn’t surprising, since the notion that “Thoughts come from our brain” is a scientific fact that is widely known. That’s our “Sun is at the center of the solar system” fact.

External links

Do we really only use ten percent of our brain?

“Teach the controversy” image from a super cool T-shirt from Amorphia.

20 July 2019

A sad story about the first moon landing

(This post contains material that some may find distressing; specifically, suicide.)

I’m too young to remember the moon landing.

(Continues below the fold.)

19 July 2019

More multimedia: Crustacean pain and nociception talk

Last year, I gave a talk at Northern Vermont University about crustacean pain. It was recorded by the local public access television station, Green Mountain Access TV, and is now up on Vimeo.

Current Topics in Science Series, Zen Faulkes from Green Mountain Access TV on Vimeo.

Big thanks to Leslie Kanat for hosting me and to Green Mountain Access TV for recording it!

Audiopapers

Corina Newsome! This is your fault! You have to go and say:

Can we get scientific journal articles on audiobook? Please?

There is a long thread that follows about possible solutions. But two things emerge:

- Software to read papers aloud automatically doesn’t do a very good job.

- Quite a few people want these.

Following my long-standing tradition of, “What the heck, I’ll have a go,” I’d like to present my first audiopaper! It’s a reading of my paper from last year on authorship disputes.

I decided to do this because I wanted to get more mileage out of a mic I’d bought for a podcast interview (forthcoming), and because I still have this discussion in the back of my head.

I often tell students, “Always plot the data”, since different patterns can give the same summary statistics. How could I help visually impaired students do something similar?

And the answer is that while there have been experiments in sonification of data, it seems to have stayed experimental and never moved into simple practical use. It got me thinking about how little we do for visually impaired researchers.
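The classic illustration of why “Always plot the data” matters is Francis Anscombe’s 1973 quartet: four small datasets with nearly identical summary statistics but very different scatterplots. The minimal Python sketch below uses two of the four datasets (I and IV, values as published by Anscombe) and shows that their means and variances agree, even though in dataset IV every x value but one is 8. The `summary` helper is just an illustration, and the rounding levels are my choice.

```python
from statistics import mean, variance

# Anscombe's quartet, datasets I and IV.
x1 = [10, 8, 13, 9, 11, 14, 6, 4, 12, 7, 5]
y1 = [8.04, 6.95, 7.58, 8.81, 8.33, 9.96, 7.24, 4.26, 10.84, 4.82, 5.68]
x4 = [8, 8, 8, 8, 8, 8, 8, 19, 8, 8, 8]
y4 = [6.58, 5.76, 7.71, 8.84, 8.47, 7.04, 5.25, 12.50, 5.56, 7.91, 6.89]


def summary(xs, ys):
    """Means (2 decimals) and sample variances (1 decimal) of x and y."""
    return (round(mean(xs), 2), round(variance(xs), 1),
            round(mean(ys), 2), round(variance(ys), 1))


# The two datasets are indistinguishable by these numbers...
print(summary(x1, y1))
print(summary(x4, y4))
# ...but a scatterplot of dataset IV is a vertical column at x = 8
# plus a single outlying point, nothing like the cloud in dataset I.
```

Sighted students see the difference instantly in a plot; the numbers alone never reveal it, which is exactly the gap that sonification would need to fill.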

I picked my authorship disputes paper for a few reasons.

- There are no bothersome figures to worry about describing.

- The topic probably has wider appeal than my data-driven papers.

- The paper is open access, so I wouldn’t run afoul of any copyright issues.

- The paper is reasonably short.

I wrote a little intro and a little outro. I pulled out my mic, fired up Audacity, and got reading. My first problem was finding a position for the mic where I could still see the computer screen so I could read from my paper.

I broke it into sections (slightly more sections than there are headings in the paper). I think it took between one and two hours to read the whole thing. It’s not quite a single take, but it’s close.

I’ve since figured out that I can probably do longer sessions, because I worked out an easy way to mark the passages I want to edit out because I stumbled or mispronounced words. After I screw up a sentence, I snap my fingers three times. This creates three sharp spikes in the playback visualization that are easy to see. That makes it easy to find the mistake, then edit the gaffe and the finger snaps out of the recording.
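Spotting the snaps by eye in Audacity works fine. For very long sessions, the same idea could in principle be automated by looking for clusters of loud, brief spikes. Here is a minimal Python sketch, assuming the recording has already been loaded as a list of amplitudes normalized to the range -1 to 1 (for example, exported as WAV and read with Python’s standard `wave` module, a step not shown here). The function name `find_snap_markers`, the 0.8 threshold, and the half-second gap are hypothetical choices for illustration, not anything Audacity provides.

```python
def find_snap_markers(samples, sample_rate, threshold=0.8,
                      max_gap_s=0.5, min_spikes=3):
    """Return times (seconds) where a run of at least `min_spikes` spikes
    starts, each spike falling within `max_gap_s` of the previous one.

    `samples` is assumed to be a list of amplitudes normalized to [-1, 1].
    """
    # 1. Find spike onsets: the first sample of each stretch where the
    #    absolute amplitude is at or above the threshold.
    onsets = []
    above = False
    for i, s in enumerate(samples):
        if abs(s) >= threshold:
            if not above:
                onsets.append(i)
            above = True
        else:
            above = False

    # 2. Group onsets that are close together in time; keep groups that
    #    are big enough to be a deliberate "snap, snap, snap" marker.
    max_gap = int(max_gap_s * sample_rate)
    markers, run = [], []
    for idx in onsets:
        if run and idx - run[-1] > max_gap:
            if len(run) >= min_spikes:
                markers.append(run[0] / sample_rate)
            run = []
        run.append(idx)
    # A run may still be open when the recording ends.
    if len(run) >= min_spikes:
        markers.append(run[0] / sample_rate)
    return markers


# Toy 10-second "recording" at 10 samples per second: three snaps
# near the 2-second mark and one stray click at 6 seconds.
rate = 10
audio = [0.0] * 100
for i in (20, 23, 26, 60):
    audio[i] = 1.0
print(find_snap_markers(audio, rate))  # prints [2.0]
```

Requiring three spikes close together is what separates a deliberate marker from a single bump of the desk or a plosive.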

I learned that it can be surprisingly hard to say “screenplay” correctly. And I curse my past self who wrote tongue twisters like “collaborative creator credit.”

Editing the recording also took about an hour. Besides cutting out my stumbles and finger snaps, I cut out some longer pauses and occasional little background sounds. The recording was a bit quiet, so I increased the gain a few decibels.

Will I do more of these? It completely depends on the response to this experiment. I probably picked my single easiest paper to read and turn into an audio recording. It would only get harder from here. And I have other projects that I should be working on.

If people like this effort, I’ll see about doing more, maybe with better production. (I wanted to put in some music, but that was taking too long for a one-off.)

External links

Resolving authorship disputes by mediation and arbitration on Soundcloud

12 June 2019

The final chapter in the UTRGV mascot saga

When UTRGV was forming, I blogged a lot about the choice of a name for a new mascot. What we got was... something that not a lot of people were happy with at first. In the years since, I guess people have made peace with it, because I haven’t heard much about the name lately.

Well, we’ve waited four, almost five years for a mascot to go with the name, and today we got it.

As far as I know, he doesn’t have a name. Just “Vaquero.”

The website lists what each feature represents, although I think a lot of this stuff is so far beyond subtle that nobody would ever guess what it is supposed to mean.

Scarf: The scarf features the half-rider logo against an orange background. Traditionally, the scarves were worn to protect against the sun, wind and dirt. Today, the scarf is worn to represent working Vaqueros.

Vest: The vest features the UTRGV Athletics symbol of the “V” on the buttons, which match the symbol on the back of the vest representing school spirit and pride.

Gloves: The gray and orange gloves symbolize strength and power. They represent Vaqueros building the future of the region and Texas.

Shirt: The white shirt represents the beginning of UTRGV, which was built through hard work and determination. (Couldn’t it just represent, I don’t know, cleanliness? - ZF)

Boots: A modern style of the classic cowboy boot, these feature elements that are unique to the region and UTRGV. The blue stitching along the boot represents the flowing Rio Grande River which signifies the ever-changing growth in the region and connects the U.S. to Mexico.

The boot handles showcase three stars. The blue star represents legacy institution UT Brownsville, the green star represents legacy institution UT-Pan American and the orange star represents the union of both to create UTRGV.

I don’t know. I was never a fan of the “Vaquero” name and this does not win me over. I just feel like the guy could do with a shave. I will be interested to see if this re-ignites the debate about the name...

Update: Apparently the mascot’s slogan is “V’s up!” Which doesn’t make any sense! And demonstrates questionable apostrophe usage!

External links

Welcome your Vaquero

11 June 2019

Tuesday Crustie: For your crustacean GIF needs

Been meaning to make GIFs of a sand crab digging, suitable for social media sharing, for a while.

Here’s a serious one.

And here’s a fun one.

10 June 2019

Journal shopping in reverse: unethical, impolite, or expected?

A recent article describes a practice unknown to me. Some authors submit papers for review and, if the reviews are positive, withdraw the paper and try again at another “higher impact” or “better” journal.

It is entirely normal for authors to go “journal shopping” when reviews are bad: submit the article, and if the reviewers don’t like it, resubmit it to another journal. But this is the first time I’d heard of this process going the other way. It would never even occur to me to do this.

Nancy Gough tweeted her agreement with this article, and said that this behaviour was unethical. And she got an earful. Frankly, online reaction to this article seemed to be summed up as, “I know you are, but what am I?”

A lot of the reaction that I saw (though I didn’t see all of it) seemed to be, “Journals exploit us, so we should exploit journals!” or “Journals should pay us for our time.” This seemed to be directed at for-profit publishers, but people seemed to be lumping journals from for-profit publishers together with non-profit journals from scientific societies.

The “People in glass houses should not throw stones” responses have a point, but I’m not sure they address the actual issue. Publishers didn’t create the norms of refereeing and peer review. That was us, guys. Scientists. We created the idea that there are research communities. We created the idea that reviewing papers is a service to that community.

I don’t know that I would call “withdraw after positive reviews and resubmit to a journal perceived as better” unethical, but I think it’s a dick move.

Like asking someone to a dance and then never dancing with them. Sure, there are no rules against it, but it’s not too much to expect a little reciprocity. The “Me first, me only” attitude drags.

Since the whole behaviour is “glam humping” and impact factor chasing, this seems a good time to link out to a couple of articles that point out the many ways that impact factor is deeply flawed: here and here.

I’ve written before about grumpiness about peer review being due in part to an eroded sense of research community. I guess people don’t want to see journals as part of the research community, but they are.

Related posts

A sense of community

External links

08 June 2019

Shoot the hostage, preprint edition

It takes a certain kind of academic to refuse to review papers, not because of a lack of expertise, a lack of time, or a conflict of interest, but because you don’t like how other authors have decided to disseminate their results.

I’ve been declining reviews for manuscripts that aren’t posted as preprints for the last couple of months (I get about 1-2 requests to review per week). I’ve been emailing the authors for every paper I decline to suggest posting.

This isn’t a new tactic, and I’ve made my thoughts on it known. But this takes review refusal to a new level. This individual isn’t just informing the editor that he won’t review; he chases down the authors to tell them how to do their job.

I’m sure the emails are meant to be helpful, and may be well crafted and polite. Still. Does advocating for preprints have to be done right then?

I see reviewing as service, as something you do to help make your research community function, and to build trust and reciprocity. I don’t see reviewing as an opportunity to chastise your colleagues for their publication decisions. But I guess some people are unconcerned whether they are seen as “generous” in their community or... something else.

And I am still struggling to work out if there are any conditions where I think it would genuinely be worth it to refuse to review.

Additional, 9 June 2019: I ran a poll on Twitter. 18% described this as “Collegial peer pressure.” The other 82% described it as “Asinine interference.”

Related posts

Shoot the hostage

07 June 2019

Graylists for academic publishing

Lots of academics are upset by bad journals, which are often labelled “predatory.” This is maybe not a great name for them, because it implies people publishing in them are unwilling victims, and we know that a lot are not.

Lots of scientists want guidance about which journals are credible and which are not. And for the last few years, there’s been a lot of interest in lists of journals. Blacklists spell out all the bad journals; whitelists give all the good ones.

The desire for lists might seem strange if you’re looking at the problem from the point of view of an author. You know what journals you read, what journals your colleagues publish in, and so on. But part of the desire for lists comes when you have to evaluate journals as part of looking at someone else’s work, like when you’re on a tenure and promotion committee.

But a new paper shows it ain’t that simple.

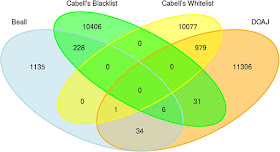

Strinzel and colleagues compared two blacklists and two whitelists, and found that some journals appeared on both a blacklist and a whitelist.

There are some obvious problems with this analysis. “Beall” is Jeffrey Beall’s blacklist, which he no longer maintains, so it is out of date. Beall’s list was also the opinion of just one person. (It’s indicative of the demand for simple lists that one put out by a single person, with little transparency, could gain so much credibility.)

One blacklist and one whitelist are from the same commercial source (Cabell), so they are not independent samples. It would be surprising if the same source listed a journal on both its whitelist and its blacklist!

The paper includes a Venn diagram for publishers, too, which shows similar results (though there is a publisher on both of Cabell’s lists).

This is pretty much what I expected. And really, this should be yesterday’s news. Let’s remember the journal Homeopathy is put out by an established, recognized academic publisher (Elsevier), indexed in Web of Science, and indexed in PubMed. It’s a bad journal on a nonexistent topic that was somehow “whitelisted” by multiple services that claimed to be vetting what they index.

Academic publishing is a complex field. We should not expect all journals to fall cleanly into two easily recognizable categories of “Good guys” and “Bad guys” – no matter how much we would like it to be that easy.

It’s always surprising to me that academics, who will nuance themselves into oblivion on their own research, so badly want “If / then” binary solutions to publishing and career advancement.

If you’re going to have blacklists and whitelists, you should have graylists, too. There are going to be journals that have some problematic practices but that are put out by people with no ill intent (unlike “predatory” journals which deliberately misrepresent themselves).

Reference

Strinzel M, Severin A, Milzow K, Egger M. 2019. Blacklists and whitelists to tackle predatory publishing: A cross-sectional comparison and thematic analysis. mBio 10(3): e00411-19. https://doi.org/10.1128/mBio.00411-19.

Related posts

06 June 2019

How do you know if the science is good? Wait 50 years

A common question among non-scientists is how to tell what science you can trust. I think the best answer is, unfortunately, the least practical one.

Wait.
There’s one peculiarity that distinguishes parascience from science. In orthodox science, it’s very rare for a controversy to last more than, a generation; 50 years at the outside. Yet this is exactly what’s happened with the paranormal, which is the best possible proof that most of it is rubbish. It never takes that long to establish the facts – when there are some facts.

— Arthur C. Clarke, 10 July 1985, “Strange Powers: The Verdict,” Arthur C. Clarke’s World of Strange Powers

Emphasis added. I must have heard Clarke say that on television decades ago, and it stuck with me all this time. I remembered it a little differently. I thought it was, “Scientists generally get to the bottom of things in about 50 years, if there’s any bottom to be gotten to.” I finally got around to digging up the exact quote today.

Related quote:

One of the things that should always be asked about scientific evidence is, how old is it? It’s like wine. If the science about climate change were only a few years old, I’d be a skeptic, too.

— Naomi Oreskes, quoted by Justin Gillis, “Naomi Oreskes, a Lightning Rod in a Changing Climate” 15 June 2015, The New York Times

30 May 2019

“Why do you love monsters?”

My wife asked me, “Why do you love monsters so much?”

Maybe because I can’t ever remember a time I didn’t know about Godzilla. The name and image (the glowing fins, the roar, the strange look that was almost a hybrid between a tyrannosaur and a stegosaur) were imprinted on me that early.

The same was true of King Kong, and maybe the Universal monsters (the Karloff Frankenstein, the Lugosi Dracula, and particularly the Creature from the Black Lagoon). In your early years, you absorb a lot of pop culture that was made before you were born.

But I certainly didn’t learn about Godzilla from being exposed to the movies, because I remember the first time I actually got to see a Godzilla movie. It was in Killarney, Manitoba, when it still had a sit-down theatre. There’s no mention of that movie theatre online now; I think it was the Roxy? (There was a drive-in, too, and I think it’s still open.)

The theatre showed a lot of re-released low-budget movies, ostensibly for a young audience, on weekend nights. I remember watching King Kong Escapes (long before I saw the original classic 1933 King Kong), an early anime called Magic Boy, and the oddly titled Super Stooges vs. the Wonder Women.

One week they showed Godzilla vs. The Thing. I think they had this poster to advertise it.

Needless to say, “The Thing” turned out to be rather different than the poster implied. I was not expecting... a moth.

In retrospect, this was a colossal stroke of luck. I later learned, via the cover article in Fangoria #1, that this was generally considered to be the best Godzilla movie. (I read and reread that article, thinking of all the Godzilla movies I had yet to see and that I felt I might never see. You had to work hard to be a nerd in those days.) Even though many more Godzilla films have been released since that Fangoria retrospective, lots of fans still rank Godzilla vs. The Thing among the best.

That good initial experience probably helped cement me as a lifelong Godzilla fan. Even now, I can watch that first movie and appreciate it, albeit on a different level than I could when I was young.

I don’t know if my love for Godzilla would have survived if the first movie I had seen had been something like Godzilla vs. Megalon. Or Son of Godzilla. I think that was the last of the original series I saw, and... that suit. Ugh.

But being a Godzilla fan teaches you to value hope over experience. Because, if we’re being honest, there aren’t that many good Godzilla movies. Even for a Godzilla fan, young or old, some are just utterly tedious.

Being a Godzilla fan has also taught me to bide my time. Because so many of the Godzilla movies were bad, they always had a reputation as being “cheap Japanese monster movies” that were easily dismissed. But guess what? The stuff that was derided for years finally earned some respect.

People started to realize just how hard it was to create those special effects. I look at the final monster battle in Destroy All Monsters, knowing what I know now, and am in awe. How they got all those suit actors, and the wires controlling monster parts, on film at all amazes me.

People began to write about how great the music of Akira Ifukube was. Composer Bear McCreary noted that the only real competitor that Ifukube’s Godzilla theme has for longevity is the James Bond theme – and the Godzilla theme is older!

The original 1954 version of Godzilla got an art house run, with all the additional American footage with Raymond Burr removed. Key shots were restored, and the so-so dubbing was gone, replaced with subtitles. It was a revelation. No longer was the original Godzilla a cheap Japanese monster movie. It was a haunting classic that evoked the horror of an atomic bomb attack.

I hope the new Godzilla movie I am going to see tonight is good. But as a fan, whether it’s good or bad as a whole is almost beside the point. There will probably be some moments, images, that linger on and impress you even if the movie as a whole doesn’t.

And maybe there will be some more kids who will grow up never remembering how or when they first learned about Godzilla.

P.S. I bought the T-shirt pictured above last year, and I never wore it. I saved it specifically to wear to the opening night of Godzilla: King of the Monsters. That night is tonight! IMAX presentation and I’m very excited!

That was close

There are many days where I am good at my job. The last weeks of the last semester were not among those days. And I say this with a couple of weeks having passed since the end of the semester.

I have a few semesters under my belt now, and I usually aim to have grading all done and posted several days before grades are due. That gives students a chance to review their grades and check for any last-minute corrections (which happen regularly when there are hundreds of students).

This semester... just did not work like that.

The biggest problem was that I ran into unanticipated problems with one of my online courses. While the regular course is online, the final exam is in person to maintain the integrity of the class. (One of my colleagues was more blunt. “Because they are cheating [profanity].”)

So I booked computer labs, multiple sessions, to administer proctored exams to the students.

Except that the rooms were not exactly as advertised. Some computers flat out wouldn’t work; students could not log into them at all. Some computers crashed repeatedly, and logged students out of the exam – which, because of exam security, would mean the student would have to start the entire exam again from scratch.

These problems would turn, say, a 25-person computer lab into something more like a 20-person lab. I did not anticipate that. I had to figure out some other arrangements for people who were taking the exam on the last scheduled day. I had people taking the exam for days after I thought I would have them all in and would just be grading.

I barely got the grades in on time. I wasn’t able to communicate with students as I wanted. It was very stressful.

And the moral of the story is: Don’t book computer labs to capacity. Leave a few seats empty to act as backups.

26 May 2019

The future of education isn’t online

There is a certain class of people who are convinced that higher education as currently taught is a stupid waste of time, and that the future is to move instruction onto the Internet. I see a lot of questions on Quora asking when this will happen.

I think the notion of online learning is appealing for a certain kind of person: technologically savvy and probably rather introverted. I’m one of these people.

But most students are not like that. Most people learn best through face-to-face interactions with instructors.

I am reminded of this by seeing this Twitter thread about Virginia Tech’s Math Emporium, which is basically an online class. Students get a computer lab and no professors.

And students hate it. I think my favourite burn is from one student, who wrote:

I would call this place hell on Earth but I don’t want to insult hell.

Even a MOOC company acknowledged that MOOCs have failed to disrupt education (they called them “dead”) in the way some people predicted.

More data shows MOOCs consistently underperform.

The vast majority of massive open online course (MOOC) learners never return after their first year, the growth in MOOC participation has been concentrated almost entirely in the world's most affluent countries, and the bane of MOOCs — low completion rates — has not improved over 6 years.

I teach some classes online, and I think you can create a good learning environment for some students. But this vision that the future of education is a bunch of YouTube videos and adaptive algorithms is not a vision that I want to see.

Update, 30 May 2019: A new report on online education adds more evidence that online schooling isn’t better, although this one focuses more on K-12 than on higher education.

Full-time virtual and blended schools consistently fail to perform as well as district public schools.

11 May 2019

A pre-print experiment, part 3: Someone did notice

In 2016, I wrote a grumpy blog post about my worries that posting preprints is probably strongly subject to the Matthew effect. It was a reaction to Twitter anecdotes about researchers (usually famous) posting preprints and immediately getting lots of attention and feedback on their work. I wanted to see if someone less famous (i.e., me) could get attention for a preprint without personally spruiking it extensively on social media.

I felt my preprint was ignored (until I wrote the aforementioned grumpy blog post). But here we are a few years later, and I’m re-evaluating that conclusion.

A new article about bioRxiv is out (Abdill and Blekhman 2019), and it includes Rxivist, a website that tracks data about manuscripts in bioRxiv. Because I posted a paper on bioRxiv, my paper is tracked in Rxivist.

It’s always interesting to be a data point in someone else’s paper.

The search function is a little wonky, but I did find my paper, and was surprised.

Rxivist showed that there has been a small but consistent number of downloads (Downloaded 421 times). Not only that, but the paper is faring pretty well compared to others on the site.

- Download rankings, all-time:
  - Site-wide: 17,413 out of 49,290
  - In ecology: 542 out of 2,046
- Since beginning of last month:
  - Site-wide: 19,899 out of 49,290

I did not expect that. Not at all.

I know there is an initial spike because I wrote my grumpy blog post and did an interview about preprints that got some attention, but even so. I know there aren’t hundreds of people doing research on sand crabs around the world, so hundreds of downloads is a much wider reach than I expected.

And some of the biggest months (such as October 2018) came after the final, official paper was published in the Journal of Coastal Research. The final paper is open access on the journal website, too, so it’s not as though people are downloading the preprint to circumvent paywalls. (Though in researching this blog post, I learned that a secondary site, BioOne, was not treating the paper as open access. Sigh.) (Update, 14 May 2019: BioOne fixed the open access problem!)

I am feeling much better about those numbers now than in the first few months after I posted the paper. I never would have anticipated that long tail of downloads years after the final paper is out.

And Rxivist certainly does a better job of providing metrics than the journal’s article page does:

There’s an Altmetric score but nothing else. It’s nice that the Altmetric scores for the preprint and the published paper are directly comparable (and I’m happy to see the score of 24 for the paper is a little higher than the preprint’s 13!), but I miss the data that Rxivist provides.

Other journals provide top 10 lists (and I’ve been happy to be on those a couple of times), but they tend to be very minimal. You often don’t know the underlying formula for how they generate those lists. The Journal of Coastal Research has a top 50 articles page that shows raw download numbers for those articles, and if you are not in that list, you have no idea how your article is doing.

While I still never got any feedback on my article before publication, I don’t feel like posting that preprint was a waste of time like I once did.

References

Abdill RJ, Blekhman R. 2019. Tracking the popularity and outcomes of all bioRxiv preprints. eLife 8: e45133. https://doi.org/10.7554/eLife.45133

Faulkes Z. 2016. The long-term sand crab study: phenology, geographic size variation, and a rare new colour morph in Lepidopa benedicti (Decapoda: Albuneidae). bioRxiv https://doi.org/10.1101/041376

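Faulkes Z. 2017. The phenology of sand crabs, Lepidopa benedicti (Decapoda: Albuneidae). Journal of Coastal Research 33(5): 1095-1101. https://doi.org/10.2112/JCOASTRES-D-16-00125.1 (BioOne site is paywalled; open access at https://www.jcronline.org/doi/full/10.2112/JCOASTRES-D-16-00125.1)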

External links

Sand crab paper on Rxivist

10 May 2019

Reliable shortcuts

There’s an old saying that if you build a better mousetrap, the world will beat a path to your door.

I think that’s true of shortcuts, not mousetraps.

Everyone wants reliable shortcuts. They don’t want to have to assess every available option every single time.

Best seller lists, Consumer Reports, “Two thumbs up!” from Siskel and Ebert, “Certified fresh” on Rotten Tomatoes, “People also shopped for” on Amazon, “Five stars” on Yelp!, and awards shows are all efforts to create shortcuts.

An amazing number of arguments in academia are about shortcuts. Almost every debate about tenure and promotion and assessments of academics I have seen or read is about shortcuts. Arguments about the GRE are about shortcuts.

I started thinking about shortcuts because of this article on which journals get into PubMed.

For some members of the scientific community, the presence of predatory journals, publications that tend to churn out low-quality content and engage in unethical publishing practices—has been a pressing concern.

Rebecca Burdine is among the concerned, because she had advised people to use PubMed as a shortcut.

I could tell parents “researching” their rare disease of interest that if it wasn’t on PubMed, then it shouldn’t be given lots of weight as a source.

Stephen Floor thinks the problem is even wider:

This has also contributed to the undermining of “peer reviewed” as a measure of validity.

But again, “peer reviewed” is a shortcut. Anyone who’s been in scientific publishing for a while knows that assessing scientific evidence is messy and complicated. Every working scientist has their own “That should never have gotten past peer review!” story.